
I'm transitioning to a full-time open source career. Your support would be greatly appreciated 🙌

The tinybench npm package is a lightweight benchmarking tool for JavaScript. It allows developers to measure the performance of their code by running benchmarks and comparing the execution times of different code snippets.

Basic Benchmarking

This feature allows you to create a basic benchmark test. You can add multiple tests to the benchmark and run them to measure their performance.

const { Bench } = require('tinybench');

const bench = new Bench();

bench.add('Example Test', () => {
  // Code to benchmark
  for (let i = 0; i < 1000; i++) {}
});

bench.run().then(() => {
  // bench.table() returns one row object per task; console.table renders them as a table
  console.table(bench.table());
});

Asynchronous Benchmarking

This feature allows you to benchmark asynchronous code. Task functions can return promises; the benchmark awaits each one, so the measured time includes the time the promise takes to resolve.

const { Bench } = require('tinybench');

const bench = new Bench();

bench.add('Async Test', async () => {
  // Asynchronous code to benchmark
  // Each call waits about 1 s, so with the default 10 iterations expect at least 10 s per run
  await new Promise(resolve => setTimeout(resolve, 1000));
});

bench.run().then(() => {
  console.table(bench.table());
});

Customizing Benchmark Options

This feature allows you to customize the benchmark options, such as how long each task runs (time, in milliseconds) and the minimum number of iterations each task performs (iterations). This can help in fine-tuning the benchmarking process.

const { Bench } = require('tinybench');

// Run each task for at least 2000 ms and at least 10 iterations
const bench = new Bench({ time: 2000, iterations: 10 });

bench.add('Custom Options Test', () => {
  // Code to benchmark
  for (let i = 0; i < 1000; i++) {}
});

bench.run().then(() => {
  console.table(bench.table());
});

The 'benchmark' package is a popular benchmarking library for JavaScript. It provides a robust API for measuring the performance of code snippets. Compared to tinybench, 'benchmark' offers more advanced features and a more comprehensive API, but it is also larger in size.

The 'perf_hooks' module is a built-in Node.js module that provides an API for measuring performance. It is lower-level than tinybench and requires more manual setup, but it is very powerful and flexible for detailed performance analysis.

The 'benny' package is another benchmarking tool for JavaScript. It focuses on simplicity and ease of use, similar to tinybench. However, 'benny' provides a more modern API and better integration with modern JavaScript features like async/await.
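To illustrate the kind of manual setup the perf_hooks paragraph refers to, here is a minimal sketch that times a function by hand with `performance.now()`, which returns milliseconds and is available as a global in modern browsers and in recent Node.js versions (where it is the same object `node:perf_hooks` exports). The helper name `timeManually` is hypothetical; a library like tinybench automates this loop and adds the statistics on top.

```javascript
// Hypothetical helper: time `fn` by hand for a fixed number of iterations
// using the global high-resolution clock (performance.now(), in milliseconds).
function timeManually(fn, iterations) {
  const samples = [];
  for (let i = 0; i < iterations; i++) {
    const start = performance.now();
    fn();
    samples.push(performance.now() - start); // elapsed ms for this single call
  }
  const total = samples.reduce((sum, s) => sum + s, 0);
  return { samples, mean: total / samples.length };
}

const { samples, mean } = timeManually(() => {
  for (let i = 0; i < 1000; i++) {}
}, 100);
console.log(`${samples.length} samples, mean ${mean.toFixed(6)} ms`);
```

Everything beyond the raw timings (warmup, outlier handling, margins of error) would still have to be written by hand in this approach.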


Benchmark your code easily with Tinybench, a simple, tiny and light-weight 7KB (2KB minified and gzipped) benchmarking library!

You can run your benchmarks in multiple JavaScript runtimes: Tinybench is completely based on Web APIs, with proper timing using process.hrtime or performance.now.

Event and EventTarget compatible events

In case you need more tiny libraries like tinypool or tinyspy, please consider submitting an RFC.

$ npm install -D tinybench

You can start benchmarking by instantiating the Bench class and adding benchmark tasks to it.

import { Bench } from 'tinybench';

const bench = new Bench({ time: 100 });

bench
  .add('faster task', () => {
    console.log('I am faster')
  })
  .add('slower task', async () => {
    await new Promise(r => setTimeout(r, 1)) // we wait 1ms :)
    console.log('I am slower')
  })
  .todo('unimplemented bench')

await bench.warmup(); // make results more reliable, ref: https://github.com/tinylibs/tinybench/pull/50
await bench.run();

console.table(bench.table());

// Output:
// ┌─────────┬───────────────┬──────────┬────────────────────┬───────────┬─────────┐
// │ (index) │ Task Name │ ops/sec │ Average Time (ns) │ Margin │ Samples │
// ├─────────┼───────────────┼──────────┼────────────────────┼───────────┼─────────┤
// │ 0 │ 'faster task' │ '41,621' │ 24025.791819761525 │ '±20.50%' │ 4257 │
// │ 1 │ 'slower task' │ '828' │ 1207382.7838323202 │ '±7.07%' │ 83 │
// └─────────┴───────────────┴──────────┴────────────────────┴───────────┴─────────┘

// List the registered todos (bench.table() only covers added tasks, not todos)
console.table(
  bench.todos.map(({ name }) => ({ 'Task name': name }))
);

// Output:
// ┌─────────┬───────────────────────┐
// │ (index) │ Task name │
// ├─────────┼───────────────────────┤
// │ 0 │ 'unimplemented bench' │
// └─────────┴───────────────────────┘

The add method accepts a task name and a task function to benchmark. It returns a reference to the Bench instance, so calls can be chained to add more tasks to the same instance.

Note that task names must be unique within an instance, because Tinybench stores tasks in a Map keyed by name.
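The chaining and the name-keyed storage described above can be sketched with a hypothetical `TinyRegistry` class (an illustration, not tinybench's actual source): `add()` returns `this`, and because tasks live in a `Map` keyed by name, adding a second task with an existing name silently replaces the first one in this sketch.

```javascript
// Hypothetical sketch of a name-keyed, chainable task registry.
class TinyRegistry {
  constructor() {
    this._tasks = new Map(); // task name -> task function
  }

  add(name, fn) {
    this._tasks.set(name, fn); // an existing entry with the same name is overwritten
    return this; // returning the instance enables chaining
  }

  get names() {
    return [...this._tasks.keys()]; // Map keeps first-insertion order
  }
}

const registry = new TinyRegistry()
  .add('parse', () => JSON.parse('{"a":1}'))
  .add('stringify', () => JSON.stringify({ a: 1 }))
  .add('parse', () => JSON.parse('[1,2,3]')); // same name: replaces the first 'parse'

console.log(registry.names); // [ 'parse', 'stringify' ]
```

Only two tasks survive, which is why duplicate names would quietly drop a benchmark.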

Also note that Tinybench does not log any results by default. After running the benchmark, you can extract the relevant stats from bench.tasks (or any other API) and process them however you want.
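As an example of such post-processing, the sketch below ranks tasks by `hz` (operations per second, one of the TaskResult fields) and reports each task relative to the fastest. It works on plain objects shaped like `{ name, result: { hz } }` so it runs without tinybench installed; with the library, the assumption is that you would pass `bench.tasks` instead. The `rankTasks` name and the input numbers are made up for illustration.

```javascript
// Hypothetical post-processing helper: rank tasks from fastest to slowest.
// Each task only needs `name` and `result.hz`; tasks without a result are skipped.
function rankTasks(tasks) {
  const finished = tasks.filter((t) => t.result && t.result.hz > 0);
  finished.sort((a, b) => b.result.hz - a.result.hz); // highest ops/sec first
  const fastest = finished.length > 0 ? finished[0].result.hz : 0;
  return finished.map((t) => ({
    name: t.name,
    opsPerSec: Math.round(t.result.hz),
    relative: t.result.hz / fastest, // 1 for the fastest task
  }));
}

// Illustrative input only; these are not measured results.
const ranked = rankTasks([
  { name: 'slow', result: { hz: 500 } },
  { name: 'fast', result: { hz: 2000 } },
  { name: 'todo', result: undefined },
]);
console.table(ranked);
```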

Bench

The Benchmark instance for keeping track of the benchmark tasks and controlling them.

Options:

export type Options = {
  /**
   * time needed for running a benchmark task (milliseconds) @default 500
   */
  time?: number;
  /**
   * number of times that a task should run even if the time option is finished @default 10
   */
  iterations?: number;
  /**
   * function to get the current timestamp in milliseconds
   */
  now?: () => number;
  /**
   * An AbortSignal for aborting the benchmark
   */
  signal?: AbortSignal;
  /**
   * Throw if a task fails (events will not work if true)
   */
  throws?: boolean;
  /**
   * warmup time (milliseconds) @default 100ms
   */
  warmupTime?: number;
  /**
   * warmup iterations @default 5
   */
  warmupIterations?: number;
  /**
   * setup function to run before each benchmark task (cycle)
   */
  setup?: Hook;
  /**
   * teardown function to run after each benchmark task (cycle)
   */
  teardown?: Hook;
};

export type Hook = (task: Task, mode: "warmup" | "run") => void | Promise<void>;
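The interaction between `time` and `iterations` can be sketched as a sampling loop that keeps going while either the time budget is not yet spent or the minimum iteration count is not yet reached. This is a simplified, synchronous illustration: the `sampleTask` name is hypothetical, and the library additionally deals with async functions, hooks and abort signals. The `now` parameter mirrors the `now` option, which lets the example inject a fake clock.

```javascript
// Hypothetical sketch: collect per-call durations until BOTH the time budget
// (ms) is spent AND at least `iterations` calls have been made.
function sampleTask(fn, { time = 500, iterations = 10, now = () => performance.now() } = {}) {
  const samples = [];
  let totalTime = 0;
  while (totalTime < time || samples.length < iterations) {
    const start = now();
    fn();
    const duration = now() - start;
    samples.push(duration);
    totalTime += duration;
  }
  return { samples, totalTime };
}

// A fake clock that advances 1 ms per call, so each fn() call "takes" 1 ms.
let fakeMs = 0;
const fakeNow = () => fakeMs++;

// Time budget of 3 ms, but at least 5 iterations: the iteration minimum wins.
console.log(sampleTask(() => {}, { time: 3, iterations: 5, now: fakeNow }).samples.length); // 5
```

With `time: 8, iterations: 2` the same fake clock yields 8 samples instead, because the time budget is then the binding constraint.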

- async run(): run the added tasks that were registered using the add method
- async runConcurrently(threshold: number = Infinity, mode: "bench" | "task" = "bench"): similar to the run method but runs concurrently rather than sequentially. See the Concurrency section.
- async warmup(): warm up the benchmark tasks
- async warmupConcurrently(threshold: number = Infinity, mode: "bench" | "task" = "bench"): warm up the benchmark tasks concurrently
- reset(): reset each task and remove its result
- add(name: string, fn: Fn, opts?: FnOpts): add a benchmark task to the task map
  - Fn: () => any | Promise<any>
  - FnOpts: {}: a set of optional functions run during the benchmark lifecycle that can be used to set up or tear down test data or fixtures without affecting the timing of each task
    - beforeAll?: () => any | Promise<any>: invoked once before iterations of fn begin
    - beforeEach?: () => any | Promise<any>: invoked before each time fn is executed
    - afterEach?: () => any | Promise<any>: invoked after each time fn is executed
    - afterAll?: () => any | Promise<any>: invoked once after all iterations of fn have finished
- remove(name: string): remove a benchmark task from the task map
- table(): table of the tasks results
- get results(): (TaskResult | undefined)[]: (getter) tasks results as an array
- get tasks(): Task[]: (getter) tasks as an array
- getTask(name: string): Task | undefined: get a task based on the name
- todo(name: string, fn?: Fn, opts: FnOptions): add a benchmark todo to the todo map
- get todos(): Task[]: (getter) tasks todos as an array

Task

A class that represents each benchmark task in Tinybench. It keeps track of the results, name, Bench instance, the task function and the number of times the task function has been executed.

- constructor(bench: Bench, name: string, fn: Fn, opts: FnOptions = {})
- bench: Bench
- name: string: task name
- fn: Fn: the task function
- opts: FnOptions: Task options
- runs: number: the number of times the task function has been executed
- result?: TaskResult: the result object
- async run(): run the current task and write the results in Task.result object
- async warmup(): warm up the current task
- setResult(result: Partial<TaskResult>): change the result object values
- reset(): reset the task to make the Task.runs a zero-value and remove the Task.result object

export interface FnOptions {
  /**
   * An optional function that is run before iterations of this task begin
   */
  beforeAll?: (this: Task) => void | Promise<void>;
  /**
   * An optional function that is run before each iteration of this task
   */
  beforeEach?: (this: Task) => void | Promise<void>;
  /**
   * An optional function that is run after each iteration of this task
   */
  afterEach?: (this: Task) => void | Promise<void>;
  /**
   * An optional function that is run after all iterations of this task end
   */
  afterAll?: (this: Task) => void | Promise<void>;
}
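The order in which these hooks fire relative to the task function (beforeAll once, beforeEach and afterEach around every call, afterAll once, with hook time kept out of the measurement) can be sketched as follows. The `runWithHooks` helper is hypothetical and synchronous for brevity; per the interface above, the real hooks may return promises and are called with `this` bound to the Task, which this sketch does not do.

```javascript
// Hypothetical sketch of the FnOptions hook order for one task.
// Only the fn() call sits between the two clock reads, so hook time is not measured.
function runWithHooks(fn, hooks, iterations, now = () => performance.now()) {
  const samples = [];
  hooks.beforeAll?.();
  for (let i = 0; i < iterations; i++) {
    hooks.beforeEach?.();
    const start = now();
    fn();
    samples.push(now() - start);
    hooks.afterEach?.();
  }
  hooks.afterAll?.();
  return samples;
}

const log = [];
runWithHooks(() => log.push('fn'), {
  beforeAll: () => log.push('beforeAll'),
  beforeEach: () => log.push('beforeEach'),
  afterEach: () => log.push('afterEach'),
  afterAll: () => log.push('afterAll'),
}, 2);
console.log(log.join(' > '));
// beforeAll > beforeEach > fn > afterEach > beforeEach > fn > afterEach > afterAll
```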

TaskResult

The benchmark task result object.

export type TaskResult = {
  /**
   * the last error that was thrown while running the task
   */
  error?: unknown;
  /**
   * The amount of time in milliseconds to run the benchmark task (cycle).
   */
  totalTime: number;
  /**
   * the minimum value in the samples
   */
  min: number;
  /**
   * the maximum value in the samples
   */
  max: number;
  /**
   * the number of operations per second
   */
  hz: number;
  /**
   * how long each operation takes (ms)
   */
  period: number;
  /**
   * task samples of each task iteration time (ms)
   */
  samples: number[];
  /**
   * samples mean/average (estimate of the population mean)
   */
  mean: number;
  /**
   * samples variance (estimate of the population variance)
   */
  variance: number;
  /**
   * samples standard deviation (estimate of the population standard deviation)
   */
  sd: number;
  /**
   * standard error of the mean (a.k.a. the standard deviation of the sampling distribution of the sample mean)
   */
  sem: number;
  /**
   * degrees of freedom
   */
  df: number;
  /**
   * critical value of the samples
   */
  critical: number;
  /**
   * margin of error
   */
  moe: number;
  /**
   * relative margin of error
   */
  rme: number;
  /**
   * p75 percentile
   */
  p75: number;
  /**
   * p99 percentile
   */
  p99: number;
  /**
   * p995 percentile
   */
  p995: number;
  /**
   * p999 percentile
   */
  p999: number;
};
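To make the relationships between these fields concrete, the sketch below derives them from an array of samples in milliseconds. Several choices are assumptions of this sketch, not documented tinybench behavior: `critical` uses the large-sample normal value 1.96 rather than a Student's t value looked up by `df`; percentiles use linear interpolation between sorted samples, which may differ from the library's rule; and `period` is taken as the sample mean with `hz = 1000 / period`. The `summarize` name is hypothetical.

```javascript
// Hypothetical sketch: derive TaskResult-style statistics from samples (ms).
// Needs at least two samples (the variance divides by n - 1).
function summarize(samples) {
  const sorted = [...samples].sort((a, b) => a - b);
  const n = sorted.length;
  const mean = sorted.reduce((sum, x) => sum + x, 0) / n;
  // Sample variance with Bessel's correction (n - 1): estimate of the population variance.
  const variance = sorted.reduce((sum, x) => sum + (x - mean) ** 2, 0) / (n - 1);
  const sd = Math.sqrt(variance);
  const sem = sd / Math.sqrt(n); // standard error of the mean
  const df = n - 1; // degrees of freedom
  const critical = 1.96; // assumption: normal approximation instead of a t-table lookup
  const moe = sem * critical; // margin of error
  const rme = (moe / mean) * 100; // relative margin of error, in percent
  // Linear-interpolation percentile over the sorted samples (an assumed definition).
  const percentile = (p) => {
    const pos = (n - 1) * p;
    const lo = Math.floor(pos);
    const hi = Math.ceil(pos);
    return sorted[lo] + (sorted[hi] - sorted[lo]) * (pos - lo);
  };
  const period = mean; // ms per operation
  return {
    min: sorted[0], max: sorted[n - 1], mean, variance, sd, sem, df, critical, moe, rme,
    period, hz: 1000 / period, // operations per second
    p75: percentile(0.75), p99: percentile(0.99), p995: percentile(0.995), p999: percentile(0.999),
  };
}

const stats = summarize([1, 2, 3, 4, 5]);
console.log(stats.mean, stats.variance, stats.df, stats.p75); // 3 2.5 4 4
```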

Events

Both the Task and Bench objects extend EventTarget, so you can attach listeners to different types of events on each class instance using the standard addEventListener and removeEventListener methods.

/**
 * Bench events
 */
export type BenchEvents =
  | "abort" // when a signal aborts
  | "complete" // when running a benchmark finishes
  | "error" // when the benchmark task throws
  | "reset" // when the reset function gets called
  | "start" // when running the benchmarks gets started
  | "warmup" // when the benchmarks start getting warmed up (before start)
  | "cycle" // when running each benchmark task gets done (cycle)
  | "add" // when a Task gets added to the Bench
  | "remove" // when a Task gets removed from the Bench
  | "todo"; // when a todo Task gets added to the Bench

/**
 * task events
 */
export type TaskEvents =
  | "abort"
  | "complete"
  | "error"
  | "reset"
  | "start"
  | "warmup"
  | "cycle";

For instance:

// runs on each benchmark task's cycle
bench.addEventListener("cycle", (e) => {
  const task = e.task!;
});

// runs only on this benchmark task's cycle
task.addEventListener("cycle", (e) => {
  const task = e.task!;
});

BenchEvent

export type BenchEvent = Event & {
  task: Task | null;
};
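Because both classes build on the standard EventTarget, the pattern can be reproduced with Web APIs alone. The sketch below defines a hypothetical `MiniBench` that dispatches a `cycle` event carrying a `task` property, mirroring the BenchEvent shape; it assumes a runtime with global `Event` and `EventTarget` (modern browsers, Deno, Node.js 15 and later). `TaskEvent` and `MiniBench` are illustrative names, not tinybench exports.

```javascript
// Hypothetical event carrying the task it refers to, like BenchEvent (Event & { task }).
class TaskEvent extends Event {
  constructor(type, task = null) {
    super(type);
    this.task = task;
  }
}

// Hypothetical minimal bench that emits one "cycle" event per finished task.
class MiniBench extends EventTarget {
  constructor(taskNames) {
    super();
    this.taskNames = taskNames;
  }

  run() {
    for (const name of this.taskNames) {
      // ...a real implementation would benchmark the task here...
      this.dispatchEvent(new TaskEvent('cycle', { name }));
    }
    this.dispatchEvent(new TaskEvent('complete'));
  }
}

const seen = [];
const mini = new MiniBench(['a', 'b']);
mini.addEventListener('cycle', (e) => seen.push(e.task.name));
mini.addEventListener('complete', () => seen.push('done'));
mini.run(); // dispatchEvent is synchronous, so all listeners have run by now
console.log(seen); // [ 'a', 'b', 'done' ]
```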

process.hrtime

If you want more accurate results in Node.js with process.hrtime, import the hrtimeNow function from the library and pass it to the Bench options as now:

import { Bench, hrtimeNow } from 'tinybench';

const bench = new Bench({ now: hrtimeNow });

It may make your benchmarks slower; see #42.
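For reference, a clock compatible with the `now` option (a function returning milliseconds) can be built on `process.hrtime.bigint()`, which returns nanoseconds as a BigInt. This is a hypothetical stand-in named `hrtimeMs`; tinybench's own `hrtimeNow` may be implemented differently, and this code only runs in Node.js.

```javascript
// Hypothetical Node.js-only clock: nanoseconds from process.hrtime.bigint(),
// converted to fractional milliseconds to match the `now?: () => number` option.
const hrtimeMs = () => Number(process.hrtime.bigint()) / 1e6;

const start = hrtimeMs();
let acc = 0;
for (let i = 0; i < 1e5; i++) acc += i; // some work to time
const elapsed = hrtimeMs() - start;
console.log(`loop took ${elapsed.toFixed(4)} ms (acc = ${acc})`);
```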

Concurrency

- When mode (the concurrency option, or the mode argument of runConcurrently) is set to null (default), concurrency is disabled.
- When mode is set to 'task', each task's iterations (calls of a task function) run concurrently.
- When mode is set to 'bench', different tasks within the bench run concurrently (concurrent cycles).

// options way (recommended)
bench.threshold = 10 // The maximum number of concurrent tasks to run. Defaults to Infinity.
bench.concurrency = "task" // The concurrency mode to determine how tasks are run.
// await bench.warmup()
await bench.run()

// standalone method way
// await bench.warmupConcurrently(10, "task")
await bench.runConcurrently(10, "task") // with runConcurrently, mode is set to 'bench' by default
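The threshold setting caps how many calls are in flight at once. The sketch below shows one common way to enforce such a cap with plain promises, a fixed pool of workers pulling from a shared queue; `runWithThreshold` is a hypothetical helper and not necessarily the mechanism tinybench uses internally.

```javascript
// Hypothetical limiter: run async jobs with at most `threshold` in flight at once.
async function runWithThreshold(jobs, threshold = Infinity) {
  const results = new Array(jobs.length);
  let next = 0; // index of the next job to start
  async function worker() {
    while (next < jobs.length) {
      const index = next++; // claimed synchronously, so no two workers share a job
      results[index] = await jobs[index]();
    }
  }
  const workerCount = Math.min(threshold, jobs.length);
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}

// Track the peak number of simultaneously running jobs.
let running = 0;
let peak = 0;
const jobs = Array.from({ length: 6 }, (_, i) => async () => {
  running++;
  peak = Math.max(peak, running);
  await new Promise((resolve) => setTimeout(resolve, 5));
  running--;
  return i * 2;
});

const done = runWithThreshold(jobs, 2).then((results) => {
  console.log(results, 'peak concurrency:', peak); // [ 0, 2, 4, 6, 8, 10 ] peak concurrency: 2
  return results;
});
```

Results keep their original order even though jobs finish out of order, because each worker writes to the index it claimed.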

Contributors: Mohammad Bagher, Uzlopak, poyoho

Feel free to create issues/discussions and then PRs for the project!

Your sponsorship can make a huge difference in continuing our work in open source!

FAQs


The npm package tinybench receives a total of 4,660,668 weekly downloads, so its popularity is classified as popular.

We found that tinybench demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 3 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

At its inaugural meeting, the JSR Working Group outlined plans for an open governance model and a roadmap to enhance JavaScript package management.

Security News

Research

An advanced npm supply chain attack is leveraging Ethereum smart contracts for decentralized, persistent malware control, evading traditional defenses.

Security News

Research

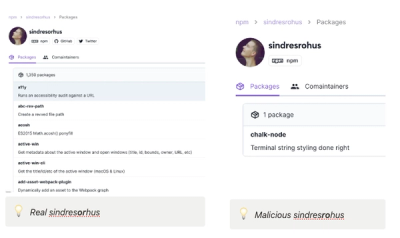

Attackers are impersonating Sindre Sorhus on npm with a fake 'chalk-node' package containing a malicious backdoor to compromise developers' projects.